The term Artificial Intelligence (AI) was coined by John McCarthy in 1956. He developed the idea further in his 1959 paper “Programs with Common Sense”, published in the Proceedings of the Teddington Conference on the Mechanization of Thought Processes, in which he discussed artificial intelligence in terms of “thinking machines”. McCarthy is widely known as the “father of AI”.

According to the English Oxford Dictionary, artificial intelligence means: “The theory and development of computer systems able to perform tasks normally requiring human intelligence, such as visual perception, speech recognition, decision making and translation between languages”. The Encyclopedia Britannica, on the other hand, refers to AI as “the ability of a digital computer or computer-controlled robot to perform tasks commonly associated with intelligent beings”. In practice, AI explores the mechanisms of human intelligence through computer modelling, in particular modelling of the decision-making process: it uses a mix of parameters, historical data and models to detect patterns that can help in making decisions.

Data is the fuel for AI!

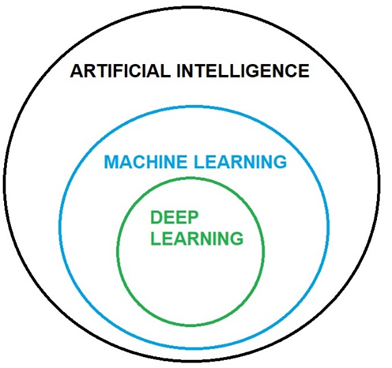

AI, machine learning, and deep learning are related, but they are not synonyms. Before moving on to the applications and classifications of AI, let us spend some time on the definition of each.

Machine learning is the science of developing computer programs that automatically improve with experience (Mitchell, 1997); in other words, it is the science of learning, and many different techniques can be applied to it. Machine learning also makes it possible to compare models systematically, to understand which model performs better and under what circumstances.
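
To make “improving with experience” concrete, here is a minimal sketch in Python. It is an illustration only, not a method taken from Mitchell: the toy data, the straight-line model and the learning rate are all assumptions chosen for this example. The program starts with a poor guess and reduces its error a little on every pass over the data.

```python
# Toy data generated from an assumed rule, y = 2x + 1.
# The learner only sees the (x, y) pairs, never the rule itself.
data = [(x, 2 * x + 1) for x in range(-3, 4)]


def mean_squared_error(w, b):
    """Average squared gap between the model's predictions and the data."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def train(epochs, learning_rate=0.05):
    """Fit y = w*x + b by gradient descent, recording the error after each pass."""
    w, b = 0.0, 0.0
    history = [mean_squared_error(w, b)]
    for _ in range(epochs):
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / len(data)
        grad_b = sum(2 * (w * x + b - y) for x, y in data) / len(data)
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
        history.append(mean_squared_error(w, b))
    return w, b, history


w, b, history = train(epochs=200)
print(f"error before training: {history[0]:.3f}")
print(f"error after training:  {history[-1]:.6f}")
print(f"learned w={w:.2f}, b={b:.2f}")
```

Here “experience” is simply repeated exposure to the same seven data points. Comparing the recorded errors of two different models on the same data is the simplest form of asking which model performs better.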

Deep learning is a subset of machine learning derived from artificial neural networks. It allows computational models composed of multiple processing layers to learn representations of data with multiple levels of abstraction (LeCun et al., 2015). This computational “intelligence” has enabled applications in health care that, on some tasks, perform comparably to a trained physician, as well as applications in fields such as agriculture, laboratory science and physics.
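
The idea of “multiple processing layers” can be sketched with a tiny two-layer network in Python. This is a simplified illustration, not code from LeCun et al.: the weights below are hand-picked assumptions so that the example stays deterministic, whereas a real deep learning system would learn them from data. The hidden layer builds an intermediate representation of the input, and the output layer combines it to compute exclusive-or, something a single layer of this kind cannot do.

```python
def relu(values):
    """Rectified linear unit: negative values become zero."""
    return [max(0.0, v) for v in values]


def dense(inputs, weights, biases):
    """One fully connected layer: each output is a weighted sum of all inputs plus a bias."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


# Hand-picked weights for illustration; in practice these would be learned.
HIDDEN_W = [[1.0, 1.0], [1.0, 1.0]]
HIDDEN_B = [0.0, -1.0]
OUTPUT_W = [[1.0, -2.0]]
OUTPUT_B = [0.0]


def forward(x1, x2):
    """Pass two inputs through the hidden layer, then the output layer."""
    hidden = relu(dense([x1, x2], HIDDEN_W, HIDDEN_B))
    output = dense(hidden, OUTPUT_W, OUTPUT_B)
    return hidden, output[0]


for a in (0, 1):
    for b in (0, 1):
        hidden, out = forward(a, b)
        print(f"input=({a}, {b}) hidden={hidden} output={out}")
```

The first hidden unit counts how many inputs are on, and the second fires only when both are on; the output layer subtracts twice the second from the first. Deep networks stack many such layers, each working on the representation produced by the one before it.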

There are two pre-requisites for deep learning:

1. High-throughput data (large amounts of data, also known as Big Data)

2. Massive computing power and memory (more specifically, graphics processing units (GPUs), central processing units (CPUs) and random-access memory (RAM)).

A Brief History of AI

The development of AI is closely tied to scientists’ growing understanding of the human brain. A general conception of thought, followed by advances in neurological research, was necessary to create a framework for understanding the brain; without some understanding of human intelligence, there is no framework for engineering an artificial intelligence.

In 1943, Warren S. McCulloch and Walter Pitts published work on artificial neurons and on how they could be connected to compute simple logic functions. This early work underpins what we now refer to as “neural networks”, one of the core concepts in modern AI technology. Seven years later, mathematician Alan Turing proposed what would later be known as the “Turing Test”, a test designed to assess whether a machine exhibits intelligent behaviour. In this test, a human judge puts questions to both a human and an AI; the AI is said to have passed if the judge cannot reliably tell it apart from the human. There are many variations of the Turing test, and many widespread criticisms, including:

• Does mimicry equal intelligence?

• Is it desirable to have an AI truly mimic a human?
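
Returning to the artificial neurons of McCulloch and Pitts: the idea of a unit that “fires” when its inputs reach a threshold can be sketched in a few lines of Python. This is a simplified model for illustration, not a reproduction of the 1943 paper; in particular, the negative weight used for NOT is an assumption standing in for the inhibitory inputs of the original formulation, and all function names are hypothetical.

```python
def threshold_neuron(inputs, weights, threshold):
    """Fire (return 1) when the weighted sum of binary inputs reaches the threshold."""
    total = sum(w * x for w, x in zip(weights, inputs))
    return 1 if total >= threshold else 0


def logic_and(a, b):
    # Both inputs must be on to reach a threshold of 2.
    return threshold_neuron([a, b], [1, 1], threshold=2)


def logic_or(a, b):
    # A single active input is enough to reach a threshold of 1.
    return threshold_neuron([a, b], [1, 1], threshold=1)


def logic_not(a):
    # An inhibitory (negative) weight: the unit fires only when the input is off.
    return threshold_neuron([a], [-1], threshold=0)


def logic_nand(a, b):
    # Connecting units: the output of one neuron feeds the next.
    return logic_not(logic_and(a, b))


for a in (0, 1):
    for b in (0, 1):
        print(a, b, logic_and(a, b), logic_or(a, b), logic_nand(a, b))
```

Each function is a single unit with fixed weights and a threshold; `logic_nand` shows how connecting two units yields a function that neither computes alone.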

The 1950s were a period of remarkable growth in AI, particularly considering the difference in computing power between 1950 and the 2020s.

In 1951, one of the earliest successful AI programs, a checkers-playing program, was written by Christopher Strachey (later of the University of Oxford) and ran on the Ferranti Mark 1 computer at the University of Manchester. The same year saw the first neural network, built by Marvin Minsky and Dean Edmunds. This early network relied on vacuum tubes and was designed to simulate a network of 40 neurons. By 1960, three known neural networks had been constructed in the West, alongside advances in computer programming. These advances included Arthur Samuel’s checkers-playing program of 1952, which was able to learn as it went along.

By 1965, Joseph Weizenbaum had created ELIZA, an important step in the development of human-facing machines and one of the earliest known natural language processing programs. Although the program was built to show that communication between computers and people was superficial, ELIZA could sustain an English dialogue on almost any topic by recognising patterns in what the user typed. ELIZA is also an important part of AI history because it marks one of the earliest known instances of people attributing feelings to a computer based on their interaction with it.
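
The kind of pattern recognition that let ELIZA keep a conversation going can be sketched in Python. The rules and replies below are hypothetical examples written for this article, not Weizenbaum’s original script: each rule pairs a pattern with a reply template, and the matched fragment is echoed back with first- and second-person words swapped.

```python
import re

# Hypothetical rules for illustration; not Weizenbaum's original script.
RULES = [
    (re.compile(r"\bi need (.+)", re.IGNORECASE), "Why do you need {0}?"),
    (re.compile(r"\bi am (.+)", re.IGNORECASE), "How long have you been {0}?"),
    (re.compile(r"\bmy (mother|father|family)\b", re.IGNORECASE),
     "Tell me more about your {0}."),
]
FALLBACK = "Please go on."

# Swap first- and second-person words so the echoed fragment reads naturally.
REFLECTIONS = {"i": "you", "me": "you", "my": "your",
               "am": "are", "you": "I", "your": "my"}


def reflect(fragment):
    """Strip trailing punctuation and swap pronouns word by word."""
    words = fragment.rstrip(".!?").split()
    return " ".join(REFLECTIONS.get(word.lower(), word) for word in words)


def respond(sentence):
    """Return the reply of the first rule that matches, else a stock phrase."""
    for pattern, template in RULES:
        match = pattern.search(sentence)
        if match:
            return template.format(reflect(match.group(1)))
    return FALLBACK


print(respond("I need a holiday from my job."))
print(respond("The weather is nice."))
```

Nothing in this sketch models meaning. The program only rearranges the user’s own words, which is why the impression of understanding it creates is superficial.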

In the early 1970s, scientists in Japan built a human-like robot that could control its own limbs and actions; in the early 1980s, Japanese scientists created another human-like robot, this time modelled on a musician, which could communicate with people and read and play music. Research continued swiftly, and in 1988 researchers at IBM began the shift from existing rule-based machine translation to statistical, data-driven methods, with the aim of producing more faithful translations between English and French. This work underlies some of the machine learning techniques still used today.

The late 1990s through the 2000s were another period of rapid growth in AI, both for scientists and for the general population, as AI gained popularity and traction in the news. In 1997, a type of recurrent neural network known as Long Short-Term Memory, or LSTM, was proposed; LSTM underpins many speech and handwriting recognition programs. The same year, IBM’s Deep Blue, built by Feng-hsiung Hsu and his team, beat chess world champion Garry Kasparov. In 2000, Honda unveiled ASIMO, a humanoid robot that was later demonstrated waiting tables in Japan. In 2009, Google started exploring driverless cars and the program StatsMonkey started writing sports news articles. In 2015, AlphaGo beat European Go champion Fan Hui. In each case, the machine carried out the task without human help.

Of course, this is just a brief history of AI, containing some highlights that can easily be traced to the development of virtual assistants such as Siri and Alexa and the self-driving trucks making their way into the freight industry in the US.

Theory of Mind

Theory of mind is the term used to describe the conceptualisation of thought and mind. It can be argued that all higher order animals have some type of mind – the question is, are they all aware of it? Does a dog have a sense of self? If so, is the dog aware of itself as a thinking being? And can it recognise the idea of other dogs as thinking beings?

Animals with theory of mind can recognise that they themselves have a mind and that others do too. This means that they can recognise the ideas of “self” and “other”. This recognition of more than one individual existence underpins how humans communicate (language) and take on other perspectives (perspective-taking, empathy). It is a mechanism we use to explain behaviour in ourselves and others, and to predict how people will act. These predictions may change based on experience.

We can see evidence of predictions and changes in the way infants act. When an infant does something cute or amusing, such as rolling a ball toward their parents, the parents may smile and acknowledge this. The infant notes this positive response and, predicting the same reaction, is likely to repeat the behaviour.

Types of AI systems

Artificial intelligence is not a new concept; as you now know, it has been around for well over fifty years, but it has received more attention in the last two decades through the voice and face recognition applications used in smartphones and social media. Our perception of what AI is, how it might be used, and what types of AI exist remains fluid and continues to shift.

AI can be classified into different types or categories. One way of classifying AI is based on its similarity to the human mind and its ability to respond to stimuli. Under this classification system, there are four categories of AI-enabled systems: reactive AI, limited memory AI, theory of mind AI, and self-aware AI.

• Reactive AI – These are the simplest machines; they have no memory and simply react (respond) to stimuli. Consequently, they have no ability to “learn”, since they do not use previous experiences (which would be stored in memory) to predict future actions. A well-known example of this type of AI is IBM’s Deep Blue, the computer that beat the world chess champion in 1997 by searching through millions of possible future moves without “understanding” the game.

• Limited Memory AI – These machines can store data, within limits, and draw on it later; in other words, they can be “trained” on historical data, and the past experiences held in memory form a reference model used to predict future situations. Most of the applications we use in our daily lives fall into this category. A few examples are self-driving vehicles, virtual assistants, smart maps, chatbots, face or image recognition, translators and voice recognition. This type of AI is heavily dependent on pattern recognition.

• Theory of Mind AI – “Theory of mind” is how scientists refer to the ways in which a mind perceives and thinks about itself and others. In terms of AI, this concept involves complex systems that perceive both the actions and the feelings of people (an area also known as artificial emotional intelligence) and then change their own actions or behaviours accordingly. Facial expressions or movements of the eyes or hands, for instance, might be detected and correlated with various emotions. This is the next level of AI, and research on it is proceeding alongside advances in other branches of AI and in neuroscience.

• Self-Aware AI – This type of AI would, in theory, learn and internalise emotions and other human traits in a way that is largely indistinguishable from human self-awareness. Whether this type of AI is realistic or desirable is very much debatable. This category of AI may be decades or centuries away from coming to fruition, and it raises a series of ethical questions. The consequences of self-aware machines could be positive or extremely negative, in the worst case culminating in the end of humanity.
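
The difference between the first two categories, reactive and limited memory, can be sketched in Python. This is a loose illustration under stated assumptions: the braking scenario, the class names, the 10-metre safe distance and the three-reading window are all hypothetical, and a real limited memory system would be trained on far more historical data than a short sliding window.

```python
from collections import deque


class ReactiveBrake:
    """Reactive: the decision depends only on the current reading; nothing is stored."""

    def __init__(self, safe_distance_m=10.0):
        self.safe_distance_m = safe_distance_m

    def decide(self, distance_m):
        return "brake" if distance_m < self.safe_distance_m else "cruise"


class LimitedMemoryBrake:
    """Limited memory: keeps a short window of past readings to anticipate the next one."""

    def __init__(self, safe_distance_m=10.0, window=3):
        self.safe_distance_m = safe_distance_m
        self.history = deque(maxlen=window)  # older readings are discarded

    def decide(self, distance_m):
        self.history.append(distance_m)
        if len(self.history) < 2:
            predicted = distance_m
        else:
            # Average change per step over the window, extrapolated one step ahead.
            trend = (self.history[-1] - self.history[0]) / (len(self.history) - 1)
            predicted = distance_m + trend
        return "brake" if predicted < self.safe_distance_m else "cruise"


readings = [30.0, 20.0, 12.0, 11.0]  # distance to the vehicle ahead, in metres
reactive = ReactiveBrake()
limited = LimitedMemoryBrake()
for distance in readings:
    print(distance, reactive.decide(distance), limited.decide(distance))
```

With these readings the reactive controller never brakes, because no single reading falls below the safe distance, while the limited memory controller brakes from the third reading onward because its stored history shows the gap closing quickly.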

There is an alternative system of classification for AI which uses more technical terminology. This system classifies AI into three groups: Artificial Narrow Intelligence (ANI), Artificial General Intelligence (AGI), and Artificial Superintelligence (ASI) (Joshi, 2019).

• Artificial Narrow Intelligence (ANI): This category encompasses all AI currently available. These systems perform a specific task autonomously using human-like capabilities. They cannot do more than they are programmed to do, which is why they are called artificial narrow intelligence.

• Artificial General Intelligence (AGI): This category represents machines that can learn, understand and function completely like a human being. They are expected to cut down on the time needed for training by building multiple competencies and forming connections and generalisations across domains. Some AI experts have estimated that AGI could be developed by around 2060.

• Artificial Superintelligence (ASI): This is the type of AI that would be the most capable form of intelligence on the planet. It would be capable not only of replicating the intelligence of human beings but of exceeding it, thanks to far greater memory, faster data processing and analysis, and superior decision-making abilities.

By John Mason